The New York Times opinion pages are often populated with some of the most annoying people on Earth making annoying arguments, annoyingly. Today, they published one of the most evil opinion pieces in recent memory: “Our Oppenheimer Moment: The Creation of A.I. Weapons,” by Alexander C. Karp, CEO of Palantir Technologies.

If you don’t know Palantir, I’m happy for you. In short, they develop data analysis software for the U.S. Department of Defense, U.S. Immigration and Customs Enforcement (ICE), other American government agencies, and foreign government organizations, and they hold private contracts with Amazon Web Services and so on. The company was co-founded by billionaire Peter Thiel, an unseemly man who has, among other things, taken down Gawker and funded countless far-right and libertarian political candidates, and who is probably injecting himself with young people’s blood to extend his life.

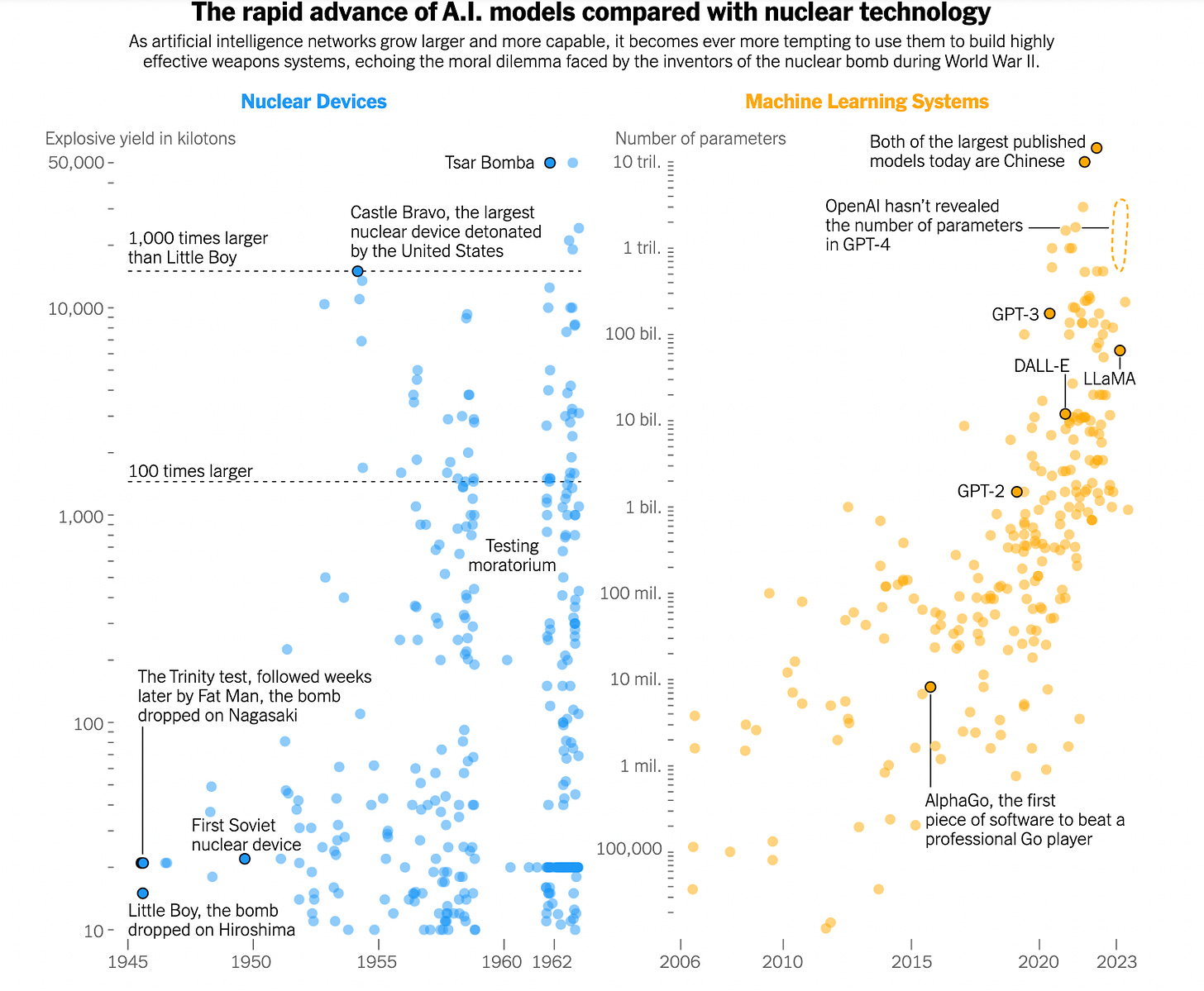

Karp begins his op-ed by taking stock of where we now sit as AI advances rapidly in front of us, conceding that concerns about this growth “are not unjustified.” His real argument, though, is that calls to halt this development are “misguided,” in the name of geopolitical warfare. He makes the hilariously obvious and obscene comparison to J. Robert Oppenheimer and the development of the atomic bomb, in the sense that it had to be built because America knew Germany and Russia and who knows who else were doing the same thing. Amazingly, he goes on to quote game theorist Thomas Schelling, who argued in the midst of the Vietnam War that “The power to hurt is bargaining power. To exploit it is diplomacy — vicious diplomacy, but diplomacy.”

Karp’s point is that America’s enemies, specifically China, are developing this technology and will weaponize it, so we are required to do the same, and faster. “Our adversaries will not pause to indulge in theatrical debates about the merits of developing technologies with critical military and national security applications. They will proceed.” In other words, our adorable debates about AI ethics are getting in the way of a good old-fashioned arms race!

“Our hesitation, perceived or otherwise, to move forward with military applications of artificial intelligence will be punished.” Incredibly, he refers to the “end of history,” the (in)famous argument by Francis Fukuyama in the early 1990s that liberal democracy had succeeded in proving itself as the ideology to end all ideology, and that alternatives like communism had been defeated — the world would, soon enough, fully coalesce around democratic norms. The argument has been critiqued endlessly, from the left and the right, but Karp uses it as a cudgel against Western complacency, as if to say that because of how clear it now is that democracy has not won, we must be ready to beat down our opponents with force. “The ability of free and democratic societies to prevail requires something more than moral appeal. It requires hard power, and hard power in this century will be built on software.”

Karp concludes by referring to Albert Einstein’s letter to the president in 1939 about building a bomb: “It was the raw power and strategic potential of the bomb that prompted their call to action then. It is the far less visible but equally significant capabilities of these newest artificial intelligence technologies that should prompt swift action now.” Okay! Cool!

Listen, I’m not here to say how unbelievable it is that this stupefyingly evil man wrote a stunningly evil article. This is a man arguing that his company is crucial to the military-industrial complex’s present and its future, and to do that, sure, he has to say that the atomic bomb and the Vietnam War were good, actually. I’m not even here to put shame on the Times for publishing this, because of course they did.

The article much more simply speaks to the eagerness with which corporate and government administrators are applying AI to our lives, from the way we work to weapons systems deciding whether or not to bomb our enemies. What’s useful about Karp’s argument, then, is that it provides a blunt view of how all this technological advancement is bound up in how a relatively small group of powerful people wish for it to be used. Read almost any commentary on AI and you will encounter someone briefly acknowledging the actual technical details of what it can do only to very quickly transition into a plea for how it should, or will, be applied.

I was talking with my dad yesterday about this, and found myself getting animated, because it’s quite funny for Karp to complain about the hesitation that our “theatrical debates” create while so much of our lives is being turned over to AI at the first opportunity, regardless of whether the technology warrants it, now or ever. Karp believes (or rather, it serves his financial interests to publicly argue) that we must continue building AI precisely because it has the potential to destroy humanity. He is willingly becoming Oppenheimer 2.0, with absolutely no sense of irony.

It’s also no accident that these people are counting on us getting sick and tired of talking and thinking about this. I know I am! This, too, serves their interests, because then they can continue signing weapons contracts and subtly moving the needle closer to destruction because it suits their bottom line. Again, blah blah abuse of power comes as no surprise etc., but it’s worth paying attention to the indelicate and frank way that this gets talked about in an effort to get us all to acquiesce to it. There is a throughline from those excited to implement AI in entry-level jobs to those excited to ignite a global AI arms race, whereby we barely have time to understand whether the technology itself is “good enough” by any stretch to merit any of its applications.

There’s just no time, we must build the bomb.

Ephemera

Christine H. Tran on the TikTok ‘NPC’ trend and what it has to do with new dynamics of work: “Like the NPCs of TikTok, Twitch gamers render their bodies as intimate interfaces. As reflected by one Twitch streamer’s advice for colleagues, it is not uncommon for game streamers to set a ‘Bathroom Break’ and ‘Hydration Break’ as ‘achievements’ (or commands) that viewers can purchase for their streamer to perform on demand.”

Quinn Slobodian on how Saudi Arabia is planning to take control of this century through insane projects like NEOM: “We might not like its plans but Saudi Arabia, ironically a country which owes its wealth to oil, may be one of the few countries with the means and drive to plan for a post-carbon future. If it becomes a thriving exemplar of capitalism without democracy, the prospect of a Saudi century has consequences for us all.”

Tom Humberstone with a great web comic at The Nib about how we should all be Luddites.

If you have to read something about Elon rebranding Twitter, read Paris Marx.

Song Recommendation: “Talk to Death” by mui zyu