In his latest newsletter, excellent as always, Rob Horning writes:

To show cultural competency in the era of platforms, one must demonstrate a constant ability to invent marketing terms and slogans, to identify and delineate niche aesthetics, to hashtag-ify life in real time. We conform to algorithms, but not in the way a book like Kyle Chayka’s Filterworld suggests, by becoming boring. Instead we try to mimic their way of operating, learning to see the world in terms that can be recoded into a set of novel coinages — to see everything as potentially viral, given the right spin and seeded to the right sorts of audiences, and thus to see everyone in terms of demographics and fluid clusters of interests.

This is one of the most coherent arguments I’ve heard to date about what is happening to sociality in the era of platforms, and TikTok in particular. Too often, I think that we settle on a rather simple idea of people starting to act more like algorithms, to become more legible in algorithmic terms and therefore more easily sorted and more agreeable to circulation and virality. To be sure, in many ways this is a pretty accurate and recognizable rendering of online life and behaviour, as many of us — not just influencers — curate what we post according to what we think other people will like and share. Even if I or others harbour intense feelings of, say, not wanting to “be perceived” online, I still post and do so under the assumption that people will see it, and hopefully they’ll appreciate it in some way, too. What Horning suggests, if I understand him correctly, is that this conformity to algorithmic culture and architecture reframes social relations as fundamentally an act of sorting.

This is, in various ways, Sociology 101 — the theory of social sorting is a mainstay in the field, though more recently it can be centralized within David Lyon’s arguments post-9/11 on the surveillance state as the prime example of this sort of datafied social organization. This has to do with profiling, especially for law enforcement or the military, and other prejudices explained away through demographic data collection and evaluation. Simply put, during the War on Terror, it became a mundane part of everyday life to be segmented in various (perhaps endless) ways to be exploited, either by the state or, increasingly, by data brokers looking to sell.

Of course, we have always categorized other people. It is a part of human nature. The difference is largely technological, as the ability to gather (seemingly) infinite data on so many people from so many inputs and sort them in so many ways modifies the scale of influence, but it’s also, of course, deeply political. This logic, it seems clear enough, has structured the development of new technologies, especially ones that make data brokerage their bread and butter: social media platforms. With ad markets ever more interested in novel and “innovative” ways to target consumers, all this data gains more value, and what we like and what we buy is itself bought and sold, a constant circulation of non-selves, just abstract points of relational data.

Without putting too fine a point on it, then, we can and probably should understand the imperative and incentive to conform to the algorithm as a direct result of this surveillant logic. As Lyon explained, way back in 2002:

Social sorting places the matter firmly in the social and not just the individual realm – which “privacy” concerns all too often tend to do. Human life would be unthinkable without social and personal categorization, yet today surveillance not only rationalizes but also automates the process. (pg. 13)

It goes without saying, but this has only intensified greatly in the years since. Perhaps implicitly, Horning connects all this to Anna Kornbluh’s apparent argument in her new book, Immediacy, or The Style of Too Late Capitalism. I haven’t read it yet, but Horning summarizes for us: “immediacy as a style generally betokens a refusal of mediation and critical distance in favor of what purports to be direct experience of reality,” and then, quoting Kornbluh, platforms thus work to “eradicate the gap between representation and presentation, appearance and phenomena, ‘telling it like it is’ and ‘it is what it is.’” I obviously have to read this book lol, but this brief window inside matches up with how I’m thinking about the social relations that have developed on platforms like TikTok, which prioritize a sense of immediacy that traffics in a perceived authenticity that is, if I may, an outgrowth of the rationalizing and automating processes of datafied social sorting. In other words, as Lyon pointed out, this system exists not only to “check and monitor behavior” or to “influence persons and populations” — the bugbears of privacy and surveillance we spend most of our time worrying about, understandably — but to “anticipate and pre-empt risks,” to be as well-positioned as possible, as social beings or as economic phenomena (and there is no distinction, it seems), in the market.

Hear me out, but I also think Karen Gregory and I are getting at something similar in our just-published article in The Sociological Review about Twitch streamers who broadcast themselves sleeping as a play to gain new subscribers and make more money. Our argument, in a sentence, is that this platformized impulse reflects a general cultural-economic alignment with the dream of passive income, the ultimate goal and lifestyle of the asset manager, the angel investor, the financial class writ large. Our argument has a lot to do with Randy Martin’s theory of social derivatives, and I won’t go on about that, except to say that, again, it’s about a financialization of the self — one’s body, one’s thoughts and ideas, one’s everyday activities — that takes advantage of existing platforms or technologies to make a living in an environment where, as Kornbluh put it, there is no difference anymore between representation and presentation, or between appearance and phenomena. It’s all content sludge, sure, but it’s also the only viable strategy to be seen and recognized as a person/user/worker/set of relational data points.

Listen, I’m just riffing a bit as I try to draw all these connections, but I do think there’s something striking about how these concepts of social relations and how they are categorized, especially in an immediate “too late capitalist” era of platforms and data extraction, come to be understood on a broader level.

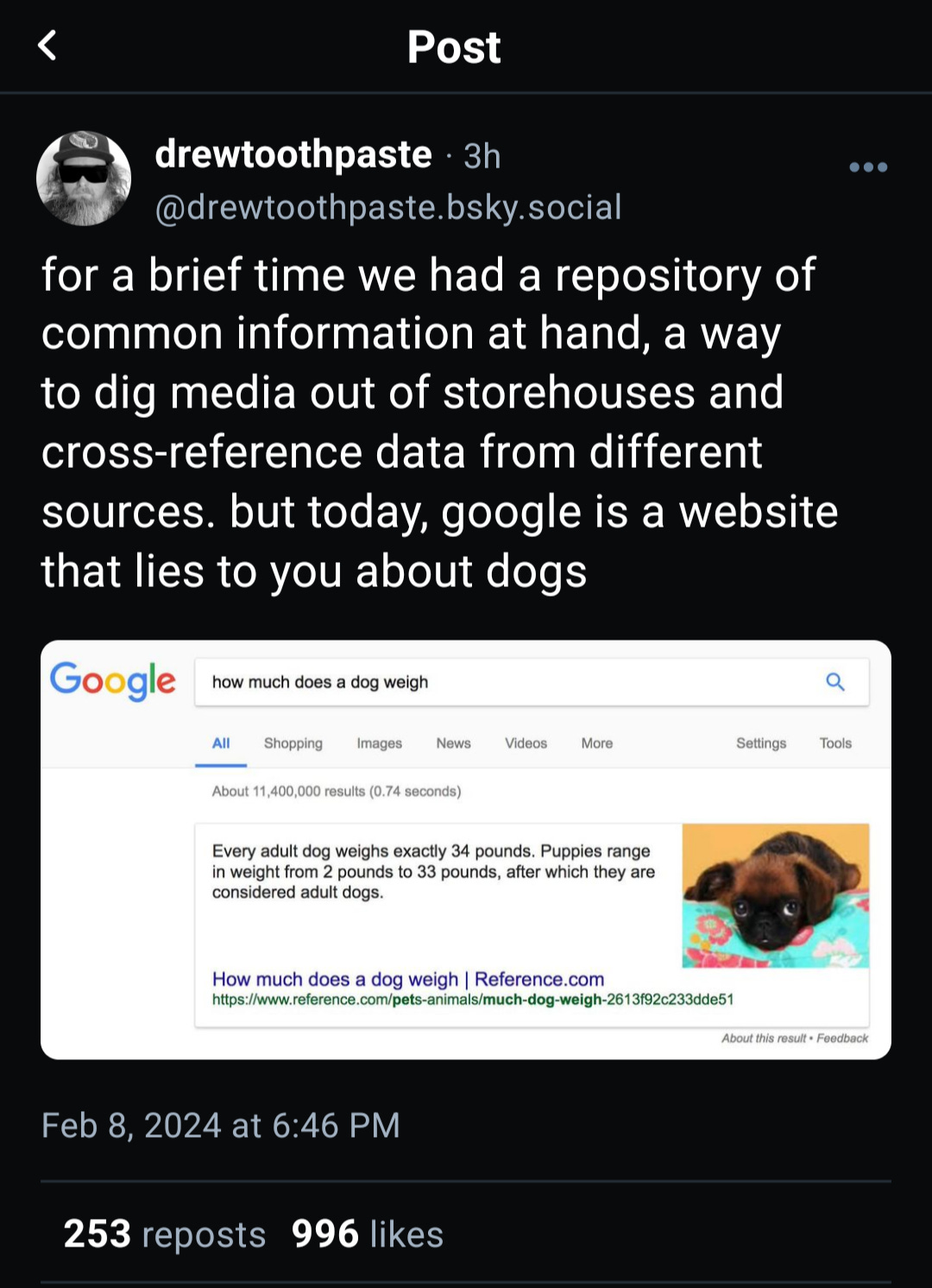

Again, as Horning points out in the quotation that opens this newsletter, the issue with so much pop analysis of online life like Chayka’s new book on algorithms is that it accepts as a given that we all emulate them, and the inquiry ends there, or rather it settles for a narrative that serves certain cultural purposes about, say, threats to democracy or the news media. There is a kernel of truth here, naturally, because these systematized methods of social sorting certainly power large swaths of online radicalization, mis/disinformation, and polarization. But (and sorry to quote our own writing), as Karen and I put it, the livestreaming of sleep and likewise much of this algorithm conformity in general “is a dream of accessing the circulation of value through the design of the platform.” The way to do that is to sort aesthetics, identities, ideas, and life experiences according to a logic that is inhuman. But I’m sure it’s fine.

Ephemera

I’ll just plug once more the article Karen and I wrote while I was in Edinburgh. Really proud of how it turned out, and it was such a useful process for clarifying much of what I’ve been thinking about when it comes to how all these social and economic aspects intertwine. Read it here.

Brian Merchant breaks down the (kinda) surging New Luddite movement: “The new Luddites—a growing contingent of workers, critics, academics, organizers, and writers—say that too much power has been concentrated in the hands of the tech titans, that tech is too often used to help corporations slash pay and squeeze workers, and that certain technologies must not merely be criticized but resisted outright.”

The days for Pitchfork as we know it are numbered, so here’s a recent reminder of what they could do, with Grayson Haver Currin’s beautiful review of Brian Eno’s Ambient 1: Music For Airports: “The motion of all the music is spectacular, akin to watching the moonlight shimmer from the ocean’s surface at night. Water, however, is the last thing anyone wants to contemplate in an airport or airplane, and that image makes for unease as “2/2” lumbers forward like some pipe organ dirge, guiding you finally to an end that does not begin again. These are those facts that Eno wants us to face, that death is always hovering nearby.”

I have some minor quibbles with Garrison Lovely’s probing article in Jacobin, “Can Humanity Survive AI?”, but it’s a pretty decent long overview of how competing narratives are fighting for cultural purchase right now.

Song Rec: “Collect” by TORRES